So, seeing as I will be subverting a Google Home for CFC Prototyping…I bought a Google Home. It's very strange as an interface. I am incredibly aware of its presence even when I am not interacting with it. That said, it does just look like a weird little speaker. So far I have tinkered with its built-in settings and written a small example application that spits out random cat facts.

I was trying to figure out where to start in making a Google Home assistant that serves something other than you, or serves itself, and I think I'm going to start with just making a Latour Litanizer, vis-à-vis Alien Phenomenology. It's a bit random, but it's a starting point. I know I want to make something like a small alien, and random is usually a good place to start.
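As a rough sketch of what I mean by a litanizer: pick a handful of unrelated things at random and read them out as one flat list. This is not the version that will run on the Google Home, just the idea in Python. The `THINGS` pool and the `litany` function are placeholders I made up for illustration; as I understand it, Bogost's original pulls its items from random Wikipedia pages rather than a hardcoded list.

```python
import random

# Hypothetical pool of things. A real litanizer would likely fetch
# these from somewhere (e.g. random Wikipedia titles) instead.
THINGS = [
    "a Google Home",
    "a cat fact",
    "the Hybrid",
    "a weird little speaker",
    "a chess computer",
    "a kettle",
    "static on a radio",
    "a houseplant",
]


def litany(things, count=4, rng=None):
    """Return a sentence listing `count` distinct random things."""
    rng = rng or random.Random()
    # Never ask for more items than the pool holds.
    count = max(1, min(count, len(things)))
    picked = rng.sample(things, count)
    if count == 1:
        return picked[0] + "."
    # Join as "a, b, and c." so it reads (and speaks) like a list.
    return ", ".join(picked[:-1]) + ", and " + picked[-1] + "."


if __name__ == "__main__":
    print(litany(THINGS))
```

Each run gives a different litany. Passing in a seeded `random.Random` makes the output repeatable, which is handy for checking what the assistant would say.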

I always feel a little conflicted about OOO. On the one hand the idea of a flat ontology is appealing, but I think I might be too rooted in being a human with thoughts, feelings, and biases to buy into it wholesale. That said, it is an interesting framework for thinking about things.

I think some futures scenarios could be centred around things like Deep Thought from Hitchhiker's, or the Hybrid from Battlestar. They're both beings, but also sort of things, and they exist on a parallel but different plane.

Anyways, starting points are good.

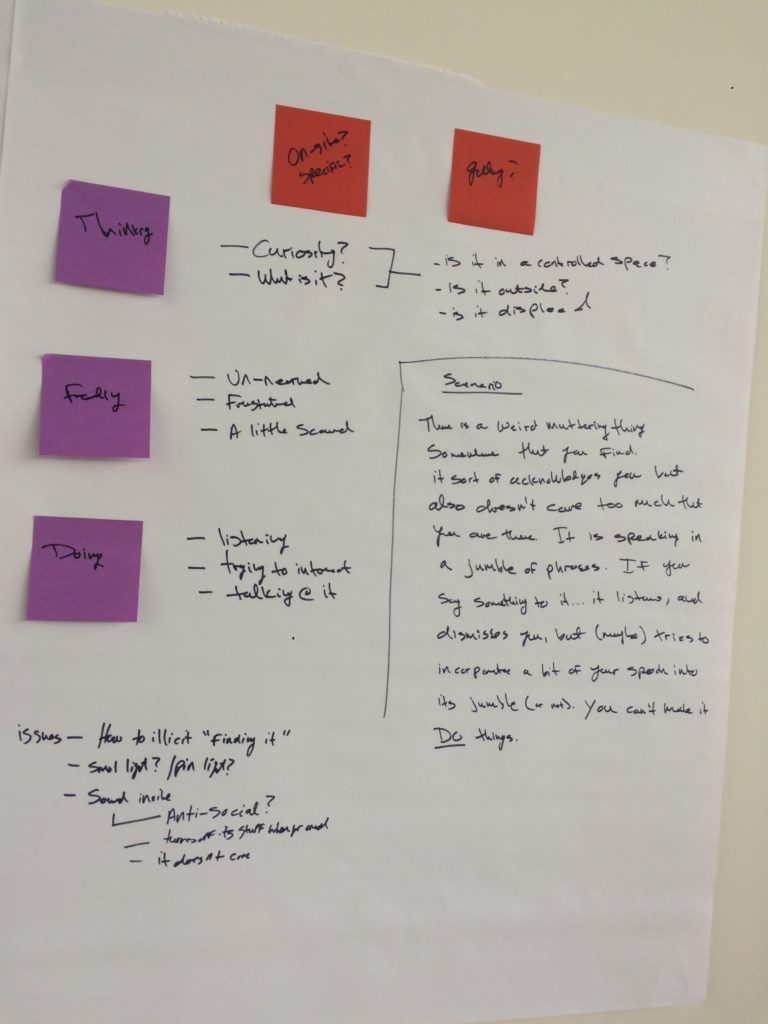

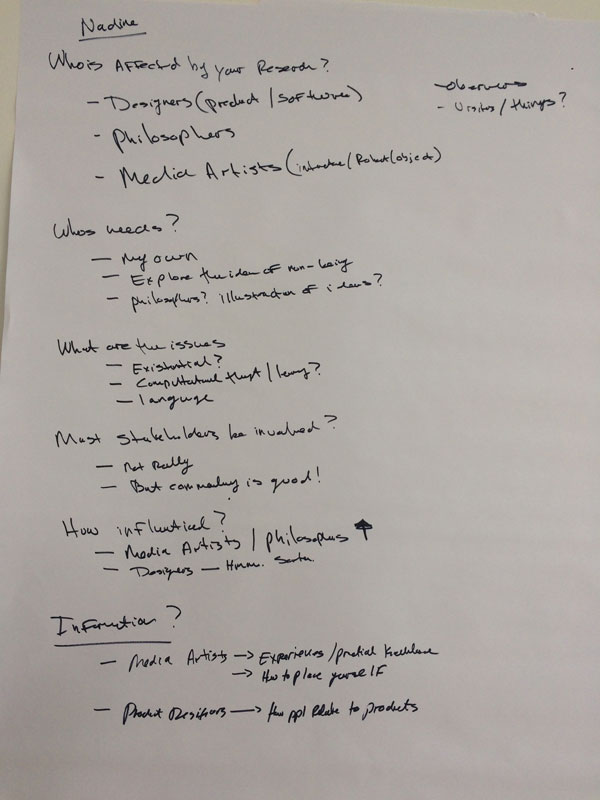

Just some scenarios I built and thoughts from CFC.
